Shoes with a personality, reacting to your movements and telling your story via Google+.

Google Talking Shoe Case Study

For six months beginning in January 2013, I led all hardware development for the Google Talking Shoe project. Working with Zach Lieberman and YesYesNo, I developed the embedded sensor hardware, PCB design, firmware, and wireless data connectivity for two iterations of the shoes, built for SXSWi and the Cannes Lions festival in 2013.

Zach and I collaborated on the native Android application, which analyzed raw sensor data streaming from the shoes to algorithmically identify movements such as walking, jumping, kicking, sitting, and twirling. When an activity was detected, the shoe provided audio feedback: a voice announcing pithy remarks such as "Try harder" or "My mother can jump higher than you". At the end of a session, the user was presented with a 'badge' in the form of a GIF, which they could post directly to Google+.
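As a rough illustration of this kind of movement detection (not the project's actual code, which ran on Android), the sketch below labels a short window of samples using simple thresholds. The `Sample` fields, the threshold constants, and the rules themselves are all assumptions made for the example.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Sample:
    """One reading from a shoe. Field names and units are hypothetical."""
    accel_mag: float  # acceleration magnitude, in g
    fsr_toe: float    # normalized pressure, 0..1
    fsr_ball: float
    fsr_heel: float


# Illustrative thresholds only; real values would need tuning on recorded data.
AIRBORNE_PRESSURE = 0.05  # total pressure below this: foot is off the ground
SEATED_PRESSURE = 0.5     # mean total pressure below this: little weight on foot
STILL_MOTION = 0.05       # acceleration spread below this: foot is at rest
ACTIVE_MOTION = 0.2       # acceleration spread above this: foot is moving


def classify(window):
    """Return a coarse activity label for a non-empty list of Samples."""
    total = [s.fsr_toe + s.fsr_ball + s.fsr_heel for s in window]
    motion = pstdev([s.accel_mag for s in window])
    airborne = sum(p < AIRBORNE_PRESSURE for p in total) / len(total)

    if airborne >= 0.3 and motion >= ACTIVE_MOTION:
        return "jumping"   # foot unloaded for a stretch, with large swings
    if motion < STILL_MOTION and mean(total) < SEATED_PRESSURE:
        return "sitting"   # foot at rest and lightly loaded
    if motion >= ACTIVE_MOTION:
        return "walking"   # foot moving but mostly in contact with the ground
    return "unknown"


if __name__ == "__main__":
    seated = [Sample(1.0, 0.1, 0.1, 0.1)] * 10
    print(classify(seated))
```

Kicking and twirling would need more than this, most likely the gyroscope axes and data from both feet, so they are left out of the sketch.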

The goal of the Art, Copy, Code team at Google was to make a case for how wearable, connected objects can tell stories about our quantified physical lives. The project got almost too much attention, and Google had to issue a press release saying that no, they were not working with Adidas to create a shoe product: this was simply an experiment.

ROLE

Lead Hardware, Firmware, Mobile App Development

TECH

Bluetooth, Inertial Movement Sensors, FSR Sensors, RF Wireless, Audio Amplification, Native Android Application, Social Integration

TEAM

YesYesNo, 72andSunny, Google Art, Copy & Code

PRESS

Much. See the case study video.

YEAR

2013

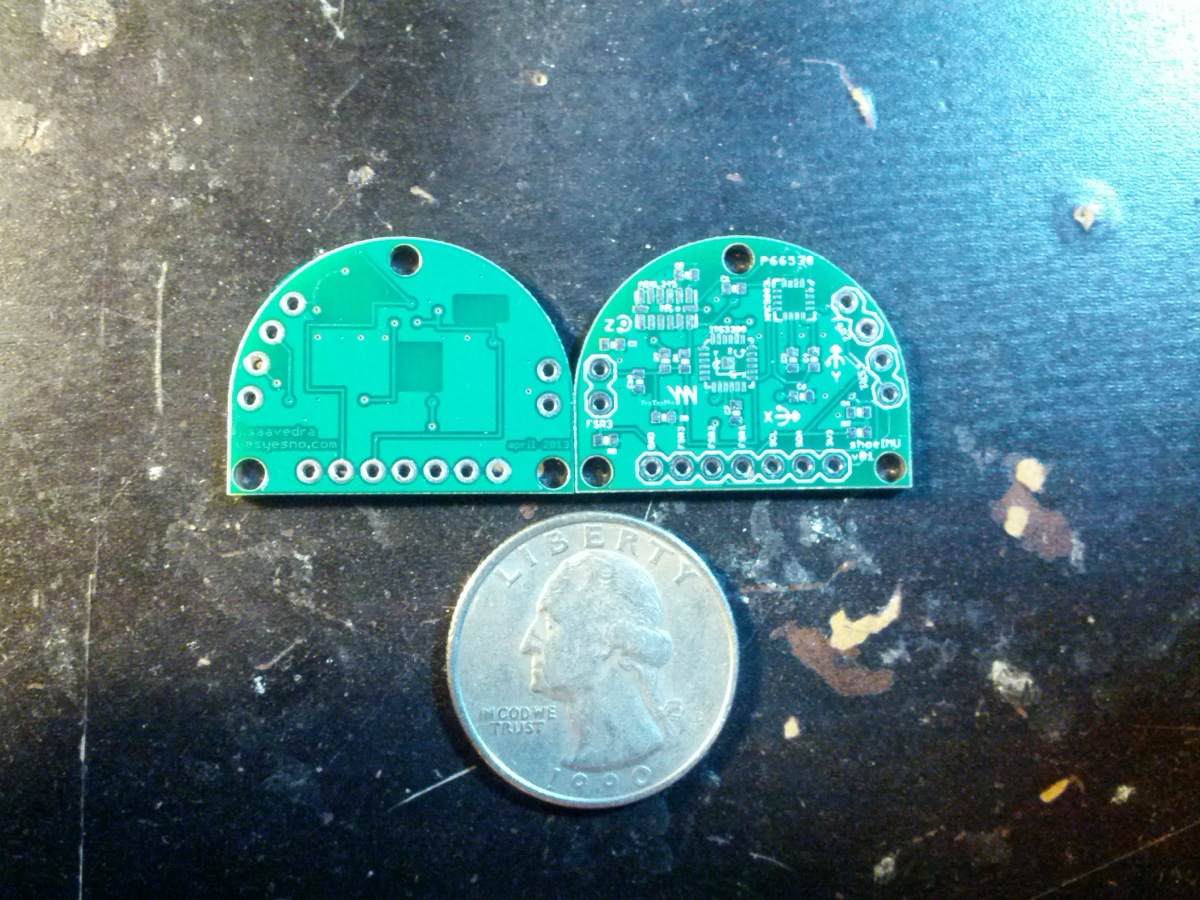

Shoe 2.0: The Communication Board lives in the tongue of the shoe. It features power management (LiPo recharging), a Bluetooth module (on the bottom side, running a speaker profile for audio throughput), an 8 Ω speaker with amplification circuitry, an RF antenna for communicating with the other foot, and a Molex connection to the shoe's IMU sensor board embedded in the sole.

Shoe 2.0: The IMU/Sensor Board lives in the sole of each shoe. It contains a 9-axis IMU sensor (top right), header connections for three FSR pressure sensors at the toe, ball, and heel of the sole, and a Molex terminal for connecting to the Communication Board.
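To make the sensor set concrete, here is a minimal sketch of how one reading from this board might be unpacked on the receiving side. The frame layout (twelve little-endian 16-bit integers: three accelerometer, three gyroscope, and three magnetometer axes, followed by the three FSR values) is an assumption for illustration, not the firmware's actual format, and `parse_frame` is a hypothetical helper.

```python
import struct
from typing import NamedTuple, Tuple

# Hypothetical wire format: 12 little-endian int16 values per frame (24 bytes).
FRAME = struct.Struct("<12h")


class Frame(NamedTuple):
    accel: Tuple[int, int, int]  # x, y, z
    gyro: Tuple[int, int, int]   # x, y, z
    mag: Tuple[int, int, int]    # x, y, z
    fsr: Tuple[int, int, int]    # toe, ball, heel


def parse_frame(payload: bytes) -> Frame:
    """Unpack one frame; raises struct.error if the payload is not 24 bytes."""
    v = FRAME.unpack(payload)
    return Frame(v[0:3], v[3:6], v[6:9], v[9:12])


if __name__ == "__main__":
    raw = FRAME.pack(*range(12))  # stand-in for bytes received from a shoe
    print(parse_frame(raw))
```

A fixed-size binary frame like this keeps per-sample overhead low, which matters when streaming several sensors over a wireless link.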